🟥 Maximally Disagreeing Images: CKA-Weighted Multi-Scale Divergence for Red Team Stimulus Selection

Abstract

We present a GPU-accelerated pipeline for the Red Team track of the ICLR 2026 Re-Align Challenge. Starting from a diverse set of vision models selected via a vectorised Centered Kernel Alignment (CKA) matrix, we identify 1,000 images from the ImageNet validation split that produce maximally divergent representations across models. Divergence is scored via CKA-weighted pairwise cosine distances in a multi-scale randomised-PCA embedding space, and final image rankings are obtained by fusing scores across PCA scales through rank aggregation. The entire pipeline runs on a single H100 GPU.

1. Introduction

The Re-Align Red Team challenge asks: which images cause the greatest disagreement in internal representations across vision models? Answering this requires both a scalable inter-model similarity metric and an efficient per-image disagreement score. We adopt Centered Kernel Alignment (CKA) as our similarity metric—consistent with the challenge evaluation protocol—and use it both to select a diverse reference set of models and to weight pairwise disagreements when scoring images.

2. Methods

2.1 Feature Extraction

For each model, activations are extracted from the designated layer using a PyTorch forward hook registered on the model, which is loaded via timm. Images are preprocessed with standard ImageNet normalisation (resize 256, centre-crop 224) and inference runs under torch.amp.autocast on a single H100. GPU memory is explicitly freed after each model. All images are drawn exclusively from the ImageNet validation split.

For CKA estimation, 2,048 images are subsampled. For divergence scoring, the full ImageNet validation set (~50k images) is used.

2.2 Vectorised CKA Matrix

We use the unbiased HSIC estimator:

\[\text{HSIC}(K, L) = \frac{\operatorname{tr}(KL) + \dfrac{(\mathbf{1}^\top K \mathbf{1})(\mathbf{1}^\top L \mathbf{1})}{(n-1)(n-2)} - \dfrac{2\,(K\mathbf{1})^\top(L\mathbf{1})}{n-2}}{n(n-3)}\]

\[\text{CKA}(K, L) = \frac{\text{HSIC}(K, L)}{\sqrt{\text{HSIC}(K,K)\cdot\text{HSIC}(L,L)}}\]

All $K$ centred Gram matrices $G_i \in \mathbb{R}^{n \times n}$ (here $K$ counts the models and is distinct from the kernel matrix $K$ in the formulas above) are stacked into a single tensor $\mathbf{G} \in \mathbb{R}^{K \times n \times n}$. The three required pairwise quantities are computed in a fixed number of GPU kernel launches:

- Pairwise traces: $T_{ij} = \operatorname{tr}(G_i G_j)$ via `einsum("imn,jmn->ij", G, G)`

- Row-sum cross-products: $R_{ij} = (G_i\mathbf{1})^\top(G_j\mathbf{1})$ via row-sum vectors and a single matmul

- Scalar outer products: $S_{ij} = (\mathbf{1}^\top G_i \mathbf{1})(\mathbf{1}^\top G_j \mathbf{1})$
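The three quantities above can be combined into a full CKA matrix along the following lines. This is a minimal NumPy sketch rather than the pipeline's GPU code: the function names `gram_zero_diag` and `cka_matrix` are hypothetical, a linear kernel is assumed, and the Gram matrices are assumed to have their diagonals zeroed, which is the usual precondition for the unbiased HSIC estimator.

```python
import numpy as np

def gram_zero_diag(X):
    """Linear-kernel Gram matrix of features X (n, D) with the diagonal set to zero.

    Zeroing the diagonal is an assumption made here because the unbiased
    HSIC estimator is normally stated for zero-diagonal kernel matrices.
    """
    G = X @ X.T
    np.fill_diagonal(G, 0.0)
    return G

def cka_matrix(G):
    """G: (K, n, n) stack of symmetric Gram matrices. Returns the (K, K) CKA matrix."""
    _, n, _ = G.shape
    # T_ij = tr(G_i G_j); for symmetric matrices this is an elementwise inner product.
    T = np.einsum("imn,jmn->ij", G, G)
    # R_ij = (G_i 1)^T (G_j 1) from row-sum vectors and a single matmul.
    r = G.sum(axis=2)                 # (K, n)
    R = r @ r.T
    # S_ij = (1^T G_i 1)(1^T G_j 1) as an outer product of scalars.
    s = r.sum(axis=1)                 # (K,)
    S = np.outer(s, s)
    hsic = (T + S / ((n - 1) * (n - 2)) - 2.0 * R / (n - 2)) / (n * (n - 3))
    norm = np.sqrt(np.diag(hsic))     # sqrt(HSIC(G_i, G_i))
    return hsic / np.outer(norm, norm)
```

Because every pairwise term is produced by one batched operation, the cost is a fixed number of array operations regardless of how many model pairs there are, which mirrors the fixed number of kernel launches described above.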

2.3 Reference Model Selection

A diverse reference set of 20 models is selected greedily from the full model suite to maximise collective representational dissimilarity. At each step the candidate with the lowest cumulative CKA to the already-selected set is added. A running vector cum_sim $\in \mathbb{R}^K$ is updated with a single $O(K)$ addition per step, yielding $O(K^2)$ total complexity. The seed is the model with the lowest mean-row CKA globally. This set is then used as the reference for all downstream divergence scoring.
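A minimal sketch of this greedy procedure is given below, assuming a precomputed symmetric CKA matrix. The function name `select_reference_models` and the tie-breaking behaviour (lowest index wins, as with `np.argmin`) are illustrative assumptions, not details taken from the pipeline.

```python
import numpy as np

def select_reference_models(cka, m):
    """Greedily pick m model indices with low cumulative CKA to the chosen set.

    cka: (K, K) symmetric CKA matrix. Returns indices in selection order.
    """
    K = cka.shape[0]
    # Seed: the model with the lowest mean-row CKA over the whole suite.
    seed = int(np.argmin(cka.mean(axis=1)))
    selected = [seed]
    available = np.ones(K, dtype=bool)
    available[seed] = False
    # cum_sim[k] holds the summed CKA between model k and all selected models.
    cum_sim = cka[seed].astype(float).copy()
    while len(selected) < m:
        # Already-selected models are masked out so they cannot be picked again.
        candidate = int(np.argmin(np.where(available, cum_sim, np.inf)))
        selected.append(candidate)
        available[candidate] = False
        cum_sim += cka[candidate]     # single O(K) update per step
    return selected
```

For example, `select_reference_models(cka, 20)` would return 20 indices into the model suite, with the seed model first.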

2.4 Randomised GPU PCA

Full-dataset features for each reference model are reduced to PCA embeddings at three scales $d \in \{16, 32, 64\}$ using a randomised SVD implemented in PyTorch, avoiding materialisation of the full $D \times D$ covariance matrix:

- Random projection: $Y = X\Omega$, $\Omega \in \mathbb{R}^{D \times (d+10)}$

- QR: $Q, \_ = \operatorname{QR}(Y)$

- Small SVD: $U, \Sigma, V^\top = \operatorname{SVD}(Q^\top X)$

- Projection: $X_r = X V_{:d}$, then L2-normalised

Peak GPU memory for the sketch matrices is $O(Nk + kD)$, where $k = d + 10$ is the width of the random projection, typically 1–2 GB for ImageNet-scale features on the H100.
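The four steps above can be sketched in NumPy as follows. This is an illustration under stated assumptions rather than the PyTorch implementation: the function name `randomized_pca`, the `oversample` and `seed` parameters, and the mean-centring of the features before projection are all assumptions not specified in the text.

```python
import numpy as np

def randomized_pca(X, d, oversample=10, seed=0):
    """Project features X (N, D) onto d approximate principal directions.

    Returns an (N, d) array whose rows are L2-normalised.
    """
    rng = np.random.default_rng(seed)
    # Assumption: features are mean-centred, as is conventional for PCA.
    Xc = X - X.mean(axis=0, keepdims=True)
    # Random projection: Y = X @ Omega, with Omega of shape (D, d + oversample).
    Omega = rng.standard_normal((Xc.shape[1], d + oversample))
    Y = Xc @ Omega
    # Orthonormal basis for the range of Y.
    Q, _ = np.linalg.qr(Y)
    # Small SVD of the (d + oversample, D) matrix Q^T X.
    _, _, Vt = np.linalg.svd(Q.T @ Xc, full_matrices=False)
    # Keep the top-d right singular vectors and project.
    Xr = Xc @ Vt[:d].T
    norms = np.linalg.norm(Xr, axis=1, keepdims=True)
    return Xr / np.maximum(norms, 1e-12)
```

Only the $N \times k$ sketch and the $k \times D$ matrix are ever factorised, so the $D \times D$ covariance matrix is never formed.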

2.5 CKA-Weighted Per-Image Disagreement Score

For a fixed scale $d$, all reference model embeddings are stacked into $\mathcal{V} \in \mathbb{R}^{M \times N \times d}$. For each image $x$:

\[\text{score}(x) = \sum_{i < j} w_{ij} \cdot \bigl(1 - \cos(\mathbf{v}_i(x),\, \mathbf{v}_j(x))\bigr)\]

where $w_{ij}$ is the normalised CKA similarity between models $i$ and $j$. Upweighting high-CKA pairs means that images are ranked highly when they cause divergence among representationally similar models, that is, disagreements that cannot be explained by architectural idiosyncrasy alone. Scoring is computed in chunked GPU einsum operations (512 images per chunk) with a single host-to-device transfer per scale.
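A chunked version of this score might look like the sketch below. It runs on the CPU with NumPy, the function name `disagreement_scores` is hypothetical, and the weight normalisation (dividing each pair's CKA by the sum over all pairs $i < j$) is one plausible reading of "normalised" that the text does not pin down.

```python
import numpy as np

def disagreement_scores(V, cka, chunk=512):
    """CKA-weighted pairwise cosine distance per image.

    V:   (M, N, d) embeddings, rows assumed L2-normalised.
    cka: (M, M) CKA matrix between the M reference models.
    """
    M, N, _ = V.shape
    upper = np.triu_indices(M, k=1)
    W = np.zeros((M, M))
    # Assumption: weights are CKA values rescaled to sum to 1 over pairs i < j.
    W[upper] = cka[upper] / cka[upper].sum()
    scores = np.empty(N)
    for start in range(0, N, chunk):
        Vc = V[:, start:start + chunk]                 # (M, c, d)
        # Cosine similarity of every model pair for every image in the chunk.
        cos = np.einsum("ind,jnd->nij", Vc, Vc)        # (c, M, M)
        # Weighted sum of cosine distances over the upper triangle.
        scores[start:start + chunk] = np.einsum("ij,nij->n", W, 1.0 - cos)
    return scores
```

Chunking bounds the size of the intermediate $c \times M \times M$ similarity tensor, which is what keeps memory flat as $N$ grows.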

2.6 Rank Fusion Across Scales

Per-scale disagreement scores are converted to ranks, and the mean rank across the three PCA scales is used as the final score. This makes the ranking robust to scale-specific distributional differences without requiring commensurable score magnitudes.
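As a small illustration of the fusion step, the sketch below converts each scale's scores to ranks and averages them. The function name `fuse_ranks` is hypothetical, ranks are assumed to be ascending (rank 1 for the lowest score, so a higher fused value means more disagreement), and ties are broken by position rather than averaged.

```python
import numpy as np

def fuse_ranks(scores_per_scale):
    """Mean rank across scales.

    scores_per_scale: (n_scales, N) array-like of per-image scores.
    Returns an (N,) array of fused rank scores.
    """
    S = np.asarray(scores_per_scale, dtype=float)
    # Double argsort turns scores into ranks; +1 makes them start at 1.
    ranks = S.argsort(axis=1).argsort(axis=1) + 1
    return ranks.mean(axis=0)
```

For instance, scores of `[0.1, 0.5, 0.3]` at one scale and `[10, 30, 20]` at another fuse to `[1, 3, 2]` even though the raw magnitudes differ by two orders of magnitude.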

2.7 Greedy Diverse Image Selection

From the top-5,000 candidates by fused rank score, 1,000 images are selected greedily by combining divergence score and diversity:

\[\text{combined}(x) = (1-\lambda)\,\hat{s}(x) + \lambda\,\hat{d}(x), \quad \lambda = 0.3\]

where $\hat{s}$ is the min-max normalised divergence score and $\hat{d}$ is the min-max normalised minimum cosine distance to the already-selected set in a mean-model proxy feature space.
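The selection loop can be sketched as follows. Several details here are assumptions rather than facts from the text: the function name `greedy_diverse_select`, seeding with the highest-scoring candidate, and re-normalising the distance term at every step.

```python
import numpy as np

def _minmax(x):
    """Scale x to [0, 1]; a constant vector maps to all zeros."""
    span = x.max() - x.min()
    return (x - x.min()) / span if span > 0 else np.zeros_like(x)

def greedy_diverse_select(scores, feats, n_select, lam=0.3):
    """Pick n_select pool indices trading off score against diversity.

    scores: (P,) fused divergence scores for the candidate pool.
    feats:  (P, D) proxy features, rows assumed L2-normalised.
    """
    s_hat = _minmax(np.asarray(scores, dtype=float))
    first = int(np.argmax(s_hat))          # assumption: seed with the top score
    selected = [first]
    chosen = np.zeros(len(s_hat), dtype=bool)
    chosen[first] = True
    # Minimum cosine distance from each candidate to the selected set.
    min_dist = 1.0 - feats @ feats[first]
    while len(selected) < n_select:
        combined = (1.0 - lam) * s_hat + lam * _minmax(min_dist)
        combined[chosen] = -np.inf         # never re-select an image
        nxt = int(np.argmax(combined))
        selected.append(nxt)
        chosen[nxt] = True
        min_dist = np.minimum(min_dist, 1.0 - feats @ feats[nxt])
    return selected
```

Updating `min_dist` incrementally keeps each step at one matrix-vector product instead of recomputing distances to the whole selected set.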

3. Experimental Setup

| Hyperparameter | Value |
|---|---|
| Hardware | 1× NVIDIA H100 (80 GB) |
| Image dataset | ImageNet validation split |
| Images for CKA estimation | 2,048 |
| Batch size | 256 |
| Reference models | 20 |
| PCA scales $d$ | 16, 32, 64 |
| GPU scoring chunk size | 512 |
| Diversity weight $\lambda$ | 0.3 |
| Candidate pool | Top-5,000 |
| Final images selected | 1,000 |

4. Results

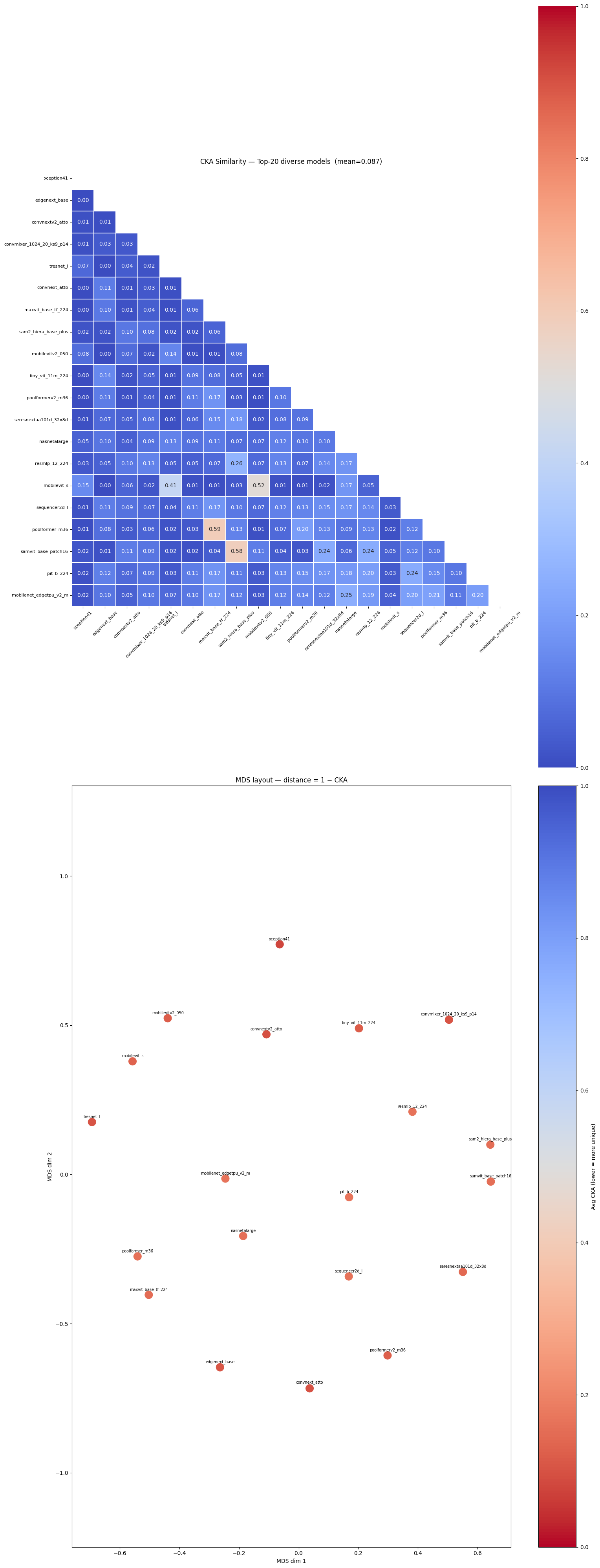

4.1 Reference Model Set

The greedy selection procedure produced a set of 20 models with a mean pairwise CKA of 0.087. Most pairwise similarities fall below 0.15, with only a small number of pairs exceeding 0.4, confirming low representational overlap across the selected set.

4.2 Image Divergence Scoring

Figure 1 (top row) shows that the per-image disagreement distributions are approximately unimodal and centred near 0.5 across all three PCA scales, with the score range slightly expanding as $d$ increases. The Spearman $\rho$ between scale pairs (Figure 1, bottom centre) is low across all pairs, with only PCA-16 vs. PCA-64 reaching approximately 0.15 and the remaining pairs near zero. This low inter-scale agreement motivates the rank-fusion step: no single scale dominates, and the fused score integrates complementary signals from all three. The top-1000 threshold falls in the right tail of the fused distribution (Figure 1, bottom left). The top-1000 score curve by rank (Figure 1, bottom right) shows a smooth decay from ~47,000 to ~37,000, indicating no abrupt cliff between selected and excluded images.

Figure 1. (Top row) Per-image weighted cosine distance distributions for PCA-16, PCA-32, and PCA-64. (Bottom left) Fused rank score distribution with top-1000 threshold marked. (Bottom centre) Spearman $\rho$ between scale pairs. (Bottom right) Top-1000 fused score by rank.

5. Discussion

Low inter-scale correlation. The near-zero Spearman $\rho$ between most scale pairs (Figure 1) suggests that different PCA dimensionalities capture largely non-overlapping aspects of representational disagreement. Rank fusion is therefore essential rather than cosmetic: any single scale would leave signal on the table.

CKA-based pair weighting. Upweighting high-CKA pairs focuses the score on images that challenge models with otherwise similar representations. Such disagreements are more likely to reflect genuine ambiguity in the image content rather than incidental differences in model architecture.

Limitations. CKA is estimated on a 2,048-image subsample; larger samples would improve accuracy. The greedy final selection approximates the NP-hard maximum-diversity problem.

6. Conclusion

We describe a single-GPU pipeline for Red Team stimulus selection. A vectorised CKA matrix, randomised GPU PCA at three scales, CKA-weighted pairwise disagreement scoring, and rank fusion together identify 1,000 ImageNet validation images that maximise representational disagreement across a diverse reference set of vision models, all running on a single H100.

References

- [Kornblith et al., 2019] Kornblith, S., Norouzi, M., Lee, H., & Hinton, G. Similarity of neural network representations revisited. ICML 2019.

- [Nguyen et al., 2021] Nguyen, T., Raghu, M., & Kornblith, S. Do wide and deep networks learn the same things? ICLR 2021.

- [Halko et al., 2011] Halko, N., Martinsson, P.-G., & Tropp, J. A. Finding structure with randomness: Probabilistic algorithms for constructing approximate matrix decompositions. SIAM Review, 53(2).