🟦 Maximizing Alignment via the Densest k-Subgraph

Introduction

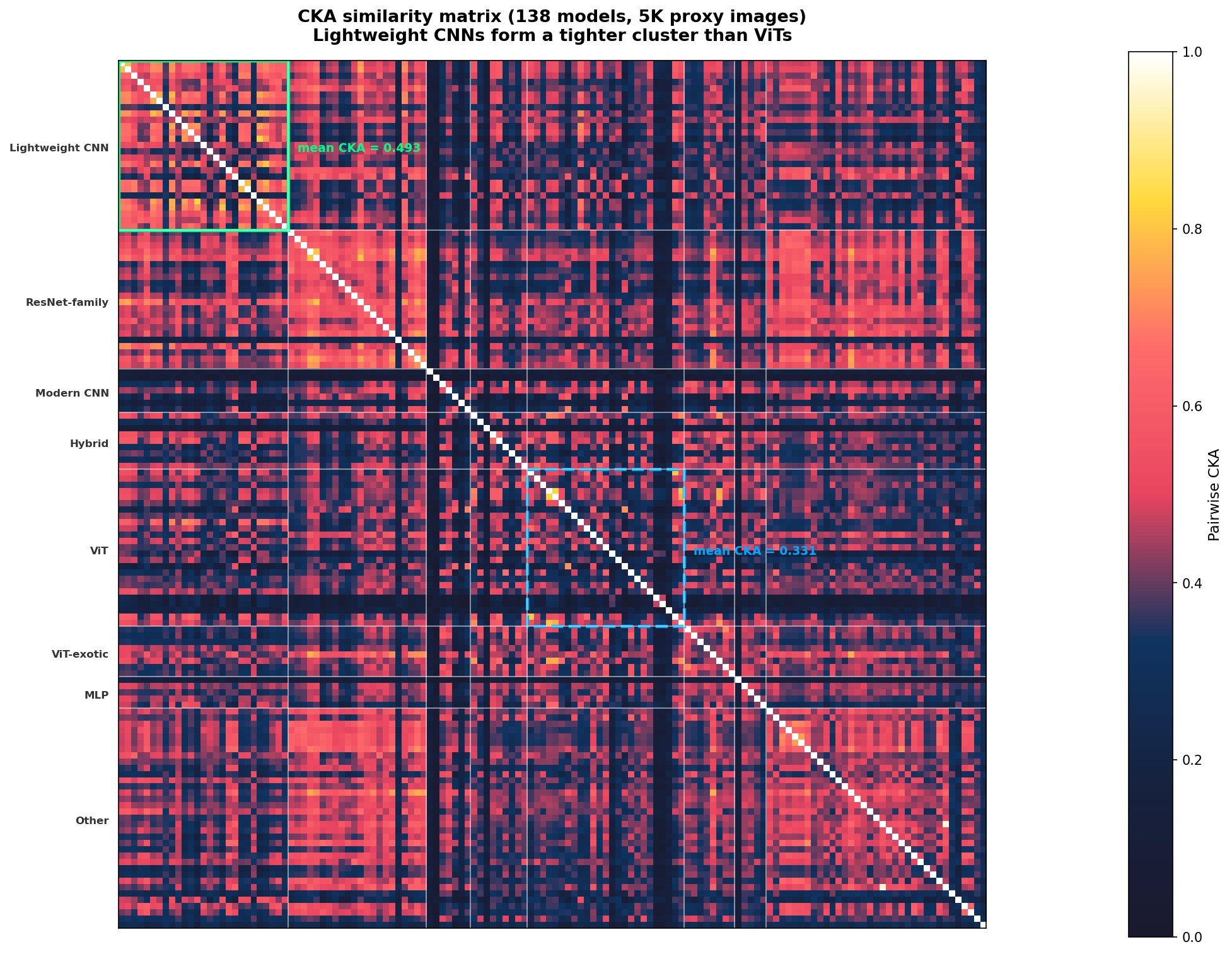

The Blue Team track asks: which 20 vision models share the most aligned internal representations? Alignment is measured as the mean pairwise Centered Kernel Alignment (CKA) across the selected models, which are drawn from the timm library.

Selecting 20 models from a registry of 141 to maximize mean pairwise CKA is an instance of the weighted Densest $k$-Subgraph (DkS) problem with $k = 20$.
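Written out, the objective looks roughly as follows. The notation is an assumption for illustration: $V$ is the model registry, $T$ the selected subset, and $S_{ij}$ the CKA between models $i$ and $j$.

$$
\max_{T \subseteq V,\ |T| = k} \ \frac{2}{k(k-1)} \sum_{\substack{i, j \in T \\ i < j}} S_{ij}, \qquad |V| = 141,\quad k = 20
$$

Because $k$ is fixed, the normalizing factor is a constant (there are $20 \cdot 19 / 2 = 190$ pairs), so maximizing the mean pairwise CKA is the same as maximizing the total edge weight of the induced subgraph, which is the weighted DkS objective.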

Theoretical motivation

Architecture families form distinct CKA clusters. Sirikova & Chan’s “Triangle of Similarity” framework established that architectural family is the primary determinant of representational similarity.

Our initial hypothesis was that Vision Transformers (ViTs) would form the tightest cluster, since self-attention produces uniform representations across layers.

Methodology

Multi-resolution CKA proxy matrices

We computed CKA proxy matrices at two resolutions:

- V1: 1,000 proxy images from ImageNet validation (138 models successfully extracted)

- V2: 5,000 stratified proxy images (2,500 ImageNet-val + 2,500 ObjectNet), same 138 models

Both matrices were computed on GPU by extracting embeddings through all registry models, computing centered Gram matrices, and measuring pairwise cosine similarity of flattened Gram vectors.
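The computation described above can be sketched in NumPy. This is a minimal illustration, not our actual GPU pipeline: it assumes a linear kernel (the kernel choice is not specified above), the function names `centered_gram` and `cka_matrix` are hypothetical, and random arrays stand in for real model embeddings.

```python
import numpy as np

def centered_gram(X):
    """Double-centered linear-kernel Gram matrix of X (n_images x dim)."""
    K = X @ X.T                           # assumption: linear kernel
    n = K.shape[0]
    H = np.eye(n) - np.ones((n, n)) / n   # centering matrix
    return H @ K @ H

def cka_matrix(embeddings):
    """Pairwise cosine similarity between flattened centered Gram matrices."""
    grams = np.stack([centered_gram(X).ravel() for X in embeddings])
    grams /= np.linalg.norm(grams, axis=1, keepdims=True)
    return grams @ grams.T

# Toy stand-ins for three models' embeddings on the same 50 proxy images.
rng = np.random.default_rng(0)
base = rng.normal(size=(50, 16))
Q, _ = np.linalg.qr(rng.normal(size=(16, 16)))   # random orthogonal rotation
embeddings = [base, base @ Q, rng.normal(size=(50, 8))]

S = cka_matrix(embeddings)
print(np.round(S, 3))
```

The first two toy models differ only by a rotation of feature space, so their entry in `S` is 1 up to floating-point error, while the unrelated third model scores much lower. Embedding widths may differ across models because each Gram matrix is indexed by images, not by features.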

The optimizer ensemble

We solved the DkS problem using a four-stage optimization pipeline:

- Spectral rounding: Extracted leading eigenvectors of the CKA matrix $S$ and performed randomized rounding over linear combinations of the top-$r$ eigenspaces (1,000+ random projections across $r \in \{1, 2, 3, 5, 10, 20, 30, 50\}$).

- Greedy search: Seeded with the globally highest-CKA pair, iteratively adding the model that maximized marginal gain in total CKA.

- Local search: Steepest-ascent 1-swap hill climbing (20,000 iterations) to polish initial solutions.

- Simulated annealing: 2,000,000 SA iterations with geometric cooling, proposing 1-swap neighborhood moves.
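Two of these stages, greedy seeding and simulated annealing over 1-swap moves, can be sketched as below. This is an illustrative sketch under stated assumptions, not our production code: the function names, the temperature schedule (`t0`, `t_end`), and the toy matrix with a planted cluster are all hypothetical, and the iteration count is far smaller than the 2,000,000 used in the real run. Spectral rounding and steepest-ascent local search are omitted for brevity.

```python
import math
import numpy as np

def mean_pairwise(S, idx):
    """Mean of the off-diagonal entries of S restricted to idx."""
    idx = np.asarray(idx)
    k = len(idx)
    sub = S[np.ix_(idx, idx)]
    return (sub.sum() - np.trace(sub)) / (k * (k - 1))

def greedy_seed(S, k):
    """Start from the highest-similarity pair, then add the best marginal model."""
    M = S.copy()
    np.fill_diagonal(M, -np.inf)
    i, j = np.unravel_index(np.argmax(M), M.shape)
    chosen = [int(i), int(j)]
    while len(chosen) < k:
        gain = S[:, chosen].sum(axis=1)   # similarity to the current set
        gain[chosen] = -np.inf            # never re-pick a chosen model
        chosen.append(int(np.argmax(gain)))
    return chosen

def anneal(S, start, iters=2000, t0=0.05, t_end=1e-4, seed=0):
    """Simulated annealing with 1-swap proposals and geometric cooling."""
    rng = np.random.default_rng(seed)
    n = S.shape[0]
    cur, cur_score = list(start), mean_pairwise(S, start)
    best, best_score = list(cur), cur_score
    alpha = (t_end / t0) ** (1.0 / iters)   # geometric cooling factor
    t = t0
    for _ in range(iters):
        outside = [m for m in range(n) if m not in cur]
        cand = list(cur)
        cand[rng.integers(len(cur))] = int(rng.choice(outside))  # 1-swap move
        cand_score = mean_pairwise(S, cand)
        delta = cand_score - cur_score
        if delta >= 0 or rng.random() < math.exp(delta / t):
            cur, cur_score = cand, cand_score
            if cur_score > best_score:
                best, best_score = list(cur), cur_score
        t *= alpha
    return best, best_score

# Toy similarity matrix: 40 models with a planted tight cluster of 8.
rng = np.random.default_rng(1)
n, k = 40, 8
A = rng.uniform(0.2, 0.5, size=(n, n))
S = (A + A.T) / 2
planted = [3, 7, 11, 15, 19, 23, 27, 31]
B = rng.uniform(0.8, 0.9, size=(k, k))
S[np.ix_(planted, planted)] = (B + B.T) / 2
np.fill_diagonal(S, 1.0)

seed_set = greedy_seed(S, k)
best_set, best_score = anneal(S, seed_set)
print(sorted(best_set), round(best_score, 4))
```

On this toy matrix the greedy stage already recovers the planted cluster, so annealing has nothing to improve. On a real CKA matrix the stages tend to disagree, which is the reason for running several of them and keeping the best subset found.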

What actually won: lightweight CNNs, not ViTs

The ViT-only strategy, which our literature review predicted would win, scored 0.4933 on V1 and 0.3923 on V2. It was not close.

The optimizer, running without architectural constraints, converged on a cluster of small, lightweight CNN and mobile architectures. The V2-optimized set (the submission that ultimately led) includes DenseNet, DLA, GhostNet, MobileOne, RegNet, RepVGG, VGG, and similar models. These are architecturally simple models with limited capacity, and they appear to develop highly similar internal representations precisely because they lack the representational diversity of larger or more exotic architectures.

The V1-optimized set (a different cluster of larger ResNet-family CNNs with SE/SK attention) scored higher on the V1 proxy but lower on V2. We submitted both.

Submitted models

Our leading submission (nathan-blueberry / nathan-strawberrrry) uses the V2-optimized lightweight CNN set:

densenet121, densenetblur121d, dla102, ecaresnetlight, efficientvit_b0, ghostnet_100, ghostnetv2_100, hardcorenas_a, hgnetv2_b0, inception_next_atto, lcnet_050, mobileone_s0, regnetx_002, regnety_002, repghostnet_050, repvgg_a0, resnetblur50, semnasnet_075, skresnet18, vgg11

Results

| Submission | Proxy V1 | Proxy V2 | Actual score |
|---|---|---|---|
| Lightweight CNN (V2-optimized) | 0.6750 | 0.6492 | 0.7587 |
| ResNet-family (V1-optimized) | 0.6973 | 0.6310 | 0.7363 |
| ViT-only | 0.4933 | 0.3923 | not submitted |

The lightweight CNN set scored 0.7587, placing 1st on the Blue Team leaderboard.

Blue Team leaderboard (as of Feb 26, 2026)

| Rank | Submitter | Score |
|---|---|---|
| 1 | nathan-blueberry (us) | 0.7587 |
| 2 | nathan-strawberrrry (us) | 0.7587 |
| 3 | m1 | 0.7508 |
| 4 | s1 | 0.7506 |
| 5 | ns1 | 0.7506 |
| 6 | me1 | 0.7506 |
| 7 | cl-9 | 0.7503 |
| 8 | pl-5 | 0.75 |
| 9 | pl-2 | 0.75 |
| 10 | m1b | 0.7497 |
| … | | |
| 69 | baseline | 0.5557 |

Discussion

The most interesting finding is that our theoretical prediction was wrong. The literature strongly suggested ViTs would form the tightest CKA cluster, but small CNNs won by a wide margin. We think the explanation is straightforward: lightweight models have so little capacity that they’re forced into similar solutions, while ViTs have enough representational headroom to diverge from each other despite sharing the same attention mechanism. This is consistent with prior work showing that larger-capacity networks develop distinctive block structure in their representations that smaller networks lack.

The actual-to-proxy ratio is roughly 1.17x (a V2 proxy score of 0.6492 against an actual score of 0.7587), which suggests our 5,000-image proxy underestimates true alignment. This is consistent with the proxy images introducing noise that the hidden evaluation set does not contain.
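The ratio quoted above can be checked directly from the Results table. The short script below is only a convenience for that arithmetic; the dictionary layout and variable names are illustrative, and the numbers are copied from the table.

```python
# Scores copied from the Results table (ViT-only omitted: it has no actual score).
results = {
    "Lightweight CNN (V2-optimized)": {"v1": 0.6750, "v2": 0.6492, "actual": 0.7587},
    "ResNet-family (V1-optimized)": {"v1": 0.6973, "v2": 0.6310, "actual": 0.7363},
}

# Actual score divided by each proxy score.
ratios = {
    name: {proxy: row["actual"] / row[proxy] for proxy in ("v1", "v2")}
    for name, row in results.items()
}

for name, r in ratios.items():
    print(f"{name}: actual/V1 = {r['v1']:.3f}, actual/V2 = {r['v2']:.3f}")
```

For both submitted sets the actual-to-V2 ratio comes out close to 1.17, while the actual-to-V1 ratios are smaller and differ between the two sets. With only two submissions this is weak evidence, but it fits the V2 proxy tracking the hidden evaluation more consistently than V1.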

The optimizer, unconstrained by our architectural assumptions, found what the theory missed: representational alignment is maximized not by architectural homogeneity at the mechanism level (attention vs. convolution), but by capacity constraints that force models into similar solutions regardless of their specific architecture.