🟥 Maximizing Representational Divergence via Semantic Restriction

Introduction

The Red Team track requires selecting 1,000 images that cause representations across ~141 vision models to diverge as much as possible.

A naive approach might select highly diverse or anomalous images to “confuse” models. This is wrong. CKA computes the cosine similarity of vectorized, doubly-centered Gram matrices.
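To make the definition concrete, here is a minimal NumPy sketch of linear CKA written directly as the cosine similarity between doubly-centered Gram matrices. The function names `center_gram` and `linear_cka` are illustrative, and the sketch assumes a linear kernel; it is not taken from the competition code.

```python
import numpy as np

def center_gram(K):
    """Doubly center a Gram matrix, i.e. compute H K H with H = I - (1/n) 11^T."""
    K = K - K.mean(axis=0, keepdims=True)   # subtract column means  -> H K
    K = K - K.mean(axis=1, keepdims=True)   # subtract row means     -> H K H
    return K

def linear_cka(X, Y):
    """Linear CKA between two embedding matrices with the same number of rows.

    X: (n, d1) embeddings from one model, Y: (n, d2) embeddings from another.
    Returns the cosine similarity of the flattened, centered Gram matrices.
    """
    Kc = center_gram(X @ X.T)
    Lc = center_gram(Y @ Y.T)
    # np.linalg.norm on a 2-D array defaults to the Frobenius norm.
    return float(np.sum(Kc * Lc) / (np.linalg.norm(Kc) * np.linalg.norm(Lc)))
```

Because only Gram matrices enter the computation, the value is unchanged by rotating or uniformly rescaling either embedding space, so the score depends on the geometry among the selected images rather than on any single image.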

The core mathematical insight

Let the embedding matrix $X \in \mathbb{R}^{n \times d}$ decompose as $X = M + W$, where $M$ captures between-class means and $W$ captures within-class deviations. After centering, the Gram matrix becomes:

\[K_c = H(MM^T + MW^T + WM^T + WW^T)H\]

When images span $k$ well-separated classes, the $HMM^TH$ term dominates. When $k = 1$ (all images from one class), $M$ reduces to a single point that the centering matrix $H$ maps to zero. The centered Gram becomes $K_c = HWW^TH$, depending entirely on within-class variation.
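The decomposition can be checked numerically on synthetic data. The sketch below uses made-up Gaussian embeddings (not real model features) and a hypothetical helper, `between_class_share`, that measures how much of the centered Gram's Frobenius norm is carried by the $HMM^TH$ term.

```python
import numpy as np

def between_class_share(X, labels):
    """Ratio ||H M M^T H||_F / ||H X X^T H||_F for embeddings X with class labels."""
    n = X.shape[0]
    H = np.eye(n) - np.ones((n, n)) / n          # centering matrix
    M = np.zeros_like(X)
    for c in np.unique(labels):
        M[labels == c] = X[labels == c].mean(axis=0)   # each row = its class mean
    Kc = H @ X @ X.T @ H
    between = H @ M @ M.T @ H
    return np.linalg.norm(between) / np.linalg.norm(Kc)

rng = np.random.default_rng(0)
n, d = 200, 16
noise = rng.normal(size=(n, d))                  # within-class deviations (W)

# k = 4 well-separated classes: class centers are far apart relative to the noise.
labels_multi = np.repeat(np.arange(4), n // 4)
centers = 10.0 * rng.normal(size=(4, d))
X_multi = centers[labels_multi] + noise

# k = 1: every image shares the same center, so M has identical rows.
labels_single = np.zeros(n, dtype=int)
X_single = centers[0] + noise

share_multi = between_class_share(X_multi, labels_multi)
share_single = between_class_share(X_single, labels_single)
print(f"between-class share, k=4: {share_multi:.3f}")
print(f"between-class share, k=1: {share_single:.2e}")
```

In the multi-class case the between-class term accounts for nearly all of the centered Gram, while in the single-class case it vanishes up to floating-point error, leaving only $HWW^TH$.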

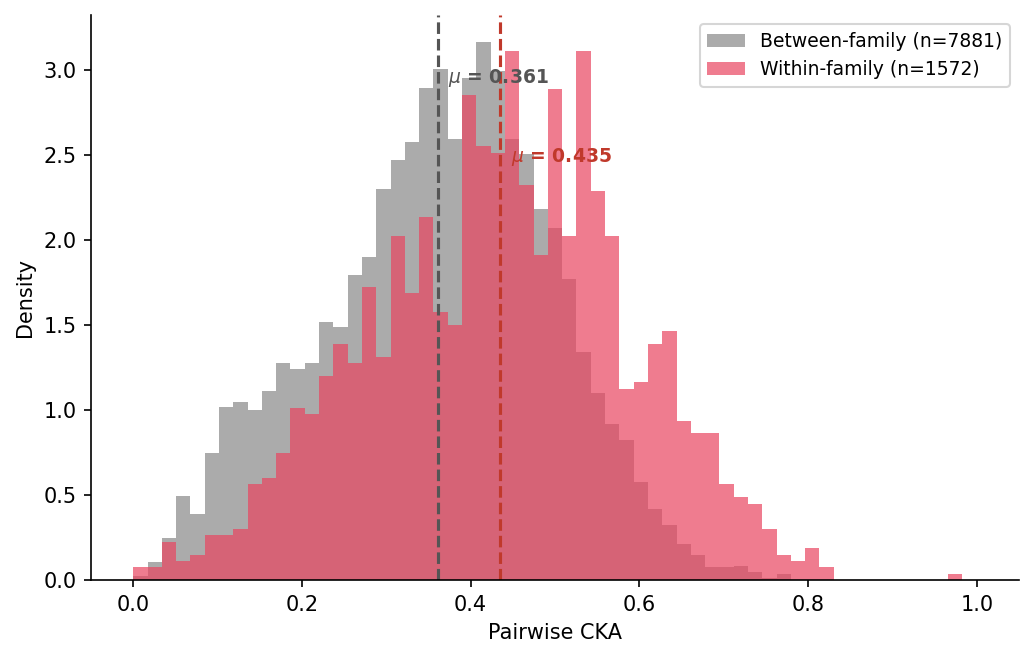

Within a single fine-grained superclass, different architectures genuinely disagree: CNNs organize by local texture and background statistics

Methodology

Stimulus selection: dog breeds

We selected domestic dogs and wild canids as the target superclass: 118 dog breeds plus 7 wild canids from ImageNet validation, yielding 6,250 candidate images (125 classes × 50 validation images each). This provides high within-class variation (breed, pose, lighting, background) while maintaining strict semantic uniformity.

Proxy models (V3 pipeline)

We used 25 proxy models spanning all major architecture families and resolution regimes:

- 4 pure CNNs (VGG, ResNet, DenseNet, ConvNeXt) at 224px

- 4 pure ViTs (DeiT3, BEiT, XCiT, EVA02) at 224px

- 2 vision-language (Apple MCLIP, AIMv2) at 224px

- 2 self-supervised (DINO, MAE) at 224px

- 5 hybrid/exotic (MambaOut, MLP-Mixer, MaxViT, DaViT, FocalNet) at 224px

- 8 resolution-diverse models spanning 176px to 384px (Wide ResNet, NFNet, FlexiViT, FastViT, ResNeSt, EfficientNetV2, CaiT, Swin)

The resolution-diverse set was added in V2/V3 to close the generalization gap we observed in V1, where all proxies used 224px crops but the evaluation pool includes models at 160px-1024px.

Optimization pipeline

- Embedding extraction: All 6,250 dog images embedded through 25 proxy models on GPU (~1 hour).

- Divergence scoring: Each image scored by cross-model representation divergence. Top 5,000 retained as candidates.

- Gram matrix precomputation: 25 centered Gram matrices of size 5000x5000 (~2.5 GB total).

- Greedy initialization: Seeded with the top 200 images by divergence score, then greedily added images that most reduced mean CKA.

- Vectorized simulated annealing: 3 restarts of 200,000 iterations each. We maintain a stacked $(M, N, N)$ sub-Gram array (here $M$ is the number of proxy models and $N$ the number of selected images, not the class-mean matrix $M$ above) with vectorized row/column updates and pre-allocated work buffers for zero-allocation CKA evaluation. Per-iteration cost is $O(MN)$ rather than $O(MN^2)$, achieving ~7-18 it/s depending on GPU.

- Local search polish: Steepest-descent 1-swap refinement after each SA restart (up to 1,000 iterations, sample 30-50 candidates per position).
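The swap-based annealing step in the list above can be sketched as follows. This is a simplified CPU illustration, not the pipeline itself: it recomputes mean pairwise CKA from scratch on every swap instead of using the incremental row/column update, and the function names, the geometric cooling schedule, and the temperature values are all assumptions.

```python
import numpy as np

def mean_pairwise_cka(grams, idx):
    """Mean linear CKA over all model pairs, restricted to the images in idx.

    grams: (M, P, P) stack of uncentered Gram matrices, one per proxy model.
    idx:   (N,) indices of the currently selected images.
    """
    sub = grams[:, idx][:, :, idx]                    # (M, N, N) sub-Grams
    sub = sub - sub.mean(axis=1, keepdims=True)       # re-center on the subset
    sub = sub - sub.mean(axis=2, keepdims=True)
    flat = sub.reshape(sub.shape[0], -1)
    flat = flat / np.linalg.norm(flat, axis=1, keepdims=True)
    sim = flat @ flat.T                               # (M, M) pairwise CKA
    upper = np.triu_indices(sim.shape[0], k=1)
    return float(sim[upper].mean())

def anneal_subset(grams, n_select, n_iters=2000, t_start=1e-2, t_end=1e-4, seed=0):
    """Simulated annealing over 1-swaps to minimize mean pairwise CKA."""
    rng = np.random.default_rng(seed)
    perm = rng.permutation(grams.shape[1])
    selected, unselected = perm[:n_select].copy(), perm[n_select:].copy()
    cost = mean_pairwise_cka(grams, selected)
    best, best_cost = selected.copy(), cost
    for it in range(n_iters):
        # Geometric cooling from t_start to t_end (an assumed schedule).
        temp = t_start * (t_end / t_start) ** (it / max(n_iters - 1, 1))
        i = rng.integers(n_select)
        j = rng.integers(len(unselected))
        selected[i], unselected[j] = unselected[j], selected[i]   # propose swap
        new_cost = mean_pairwise_cka(grams, selected)
        if new_cost <= cost or rng.random() < np.exp((cost - new_cost) / temp):
            cost = new_cost                                       # accept
            if cost < best_cost:
                best, best_cost = selected.copy(), cost
        else:
            selected[i], unselected[j] = unselected[j], selected[i]   # revert
    return best, best_cost
```

Re-centering on the subset matters here: a Gram matrix centered over the whole candidate pool is not centered over an arbitrary 1,000-image selection, so the sketch starts from uncentered Grams and centers each sub-Gram itself.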

Checkpointing

The cluster jobs were repeatedly preempted by Slurm. We added a checkpoint system that writes the best selection to persistent storage every 10,000 SA iterations and on SIGTERM, allowing runs to resume from where they left off.
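A checkpoint scheme of this kind might look like the sketch below, which uses only the Python standard library. The `Checkpointer` class, its method names, and the JSON file layout are hypothetical; only the 10,000-iteration interval and the SIGTERM trigger come from the description above.

```python
import json
import os
import signal
import tempfile

class Checkpointer:
    """Periodic and signal-triggered checkpointing of the best selection so far."""

    def __init__(self, path, every=10_000):
        self.path = path
        self.every = every
        self.stop_requested = False

    def install_handler(self):
        # Must be called from the main thread. Slurm sends SIGTERM before preemption.
        signal.signal(signal.SIGTERM, self._on_sigterm)

    def _on_sigterm(self, signum, frame):
        # Only set a flag here; the optimization loop does the actual write.
        self.stop_requested = True

    def should_save(self, iteration):
        return self.stop_requested or (iteration > 0 and iteration % self.every == 0)

    def save(self, iteration, best_indices, best_cost):
        state = {
            "iteration": int(iteration),
            "best_indices": [int(i) for i in best_indices],
            "best_cost": float(best_cost),
        }
        # Write to a temp file in the same directory, then rename atomically,
        # so a preemption mid-write cannot leave a truncated checkpoint.
        directory = os.path.dirname(os.path.abspath(self.path))
        fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
        with os.fdopen(fd, "w") as f:
            json.dump(state, f)
        os.replace(tmp_path, self.path)

    def load(self):
        """Return the saved state dict, or None if no checkpoint exists yet."""
        if not os.path.exists(self.path):
            return None
        with open(self.path) as f:
            return json.load(f)
```

In an annealing loop, one would call `load()` once at startup to resume, then check `should_save(it)` each iteration, write the checkpoint, and exit cleanly if `stop_requested` is set.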

Results

Our V3 submission was built from a checkpoint after 1 completed SA restart (200K iterations + local search polish), with a proxy CKA of 0.441 across 25 proxy models.

| Submission | Proxy models | Proxy CKA | Proxy score | Actual score |
|---|---|---|---|---|
| V1 (11 proxies, 224px only) | 11 | 0.416 | 0.584 | 0.547 |
| V3 (25 proxies, multi-resolution, partial) | 25 | 0.441 | 0.559 | 0.554 |

The V3 submission scored 0.5544, placing 1st on the Red Team leaderboard. V1 (submitted earlier as nathan-test) scored 0.5472 at rank 3.

Red Team leaderboard (as of Feb 26, 2026)

| Rank | Submitter | Score |
|---|---|---|
| 1 | nathan-tryingsomething (us) | 0.5544 |
| 2 | tehruhn_imn1000_23 | 0.5499 |
| 3 | nathan-test | 0.5472 |
| 4 | moonshine-r92 | 0.5439 |
| 5 | moonshine-93 | 0.5439 |
| 6 | moonshine-r92 | 0.5439 |
| 7 | express-double-3 | 0.5266 |
| 8 | kencan-1st-attempt | 0.5177 |
| 9 | kencan-2nd-submit | 0.5177 |
| 10 | kencan-1st-submit | 0.5177 |
| … | | |
| 72 | express-double-5 | 0.393 |

Generalization gap

The V3 proxy score (0.559) and actual score (0.554) differ by only 0.005, compared with a gap of 0.037 in V1 (0.584 proxy vs 0.547 actual). The 25-model multi-resolution proxy set substantially narrowed the generalization gap compared to the 11-model 224px-only V1 proxy.

Interestingly, V3’s proxy CKA (0.441) is higher than V1’s (0.416), yet V3 scores better on the actual evaluation. This makes sense: V3’s proxy is a harder, more representative approximation of the true evaluation, so a slightly worse proxy score actually corresponds to a better real score.

Implications

Our result demonstrates a practical consequence of the known dataset-sensitivity of CKA.

If the community wants CKA to measure genuine representational alignment rather than shared categorical knowledge, evaluation datasets should be composed of semantically uniform stimuli where coarse class structure cannot dominate the signal.